Published in ICML 2021

Abstract

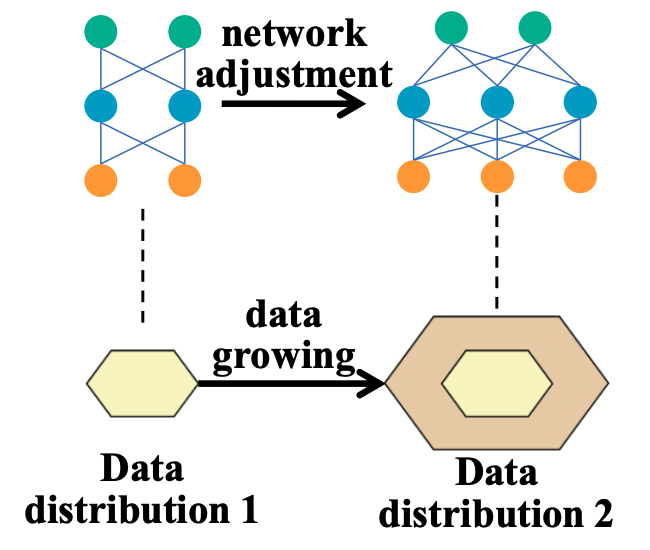

In real-world applications, data often arrive incrementally, with the data volume or the number of classes increasing dynamically. This poses a critical challenge for learning: as the data volume or the number of classes grows, one has to instantaneously adjust the neural model capacity to obtain promising performance. Existing methods either ignore the growing nature of data or independently search for an optimal architecture for each given dataset, and are thus incapable of promptly adjusting architectures as the data change. To address this, we present a neural architecture adaptation method, namely AdaXpert, to efficiently adjust previous architectures on the growing data. Specifically, we introduce an architecture adjuster that generates a suitable architecture for each data snapshot, based on the previous architecture and the extent of the difference between the current and previous data. Furthermore, we propose an adaptation condition to determine whether architecture adjustment is necessary, thereby avoiding unnecessary and time-consuming adjustments. Extensive experiments on two data growth scenarios (increasing data volume and increasing number of classes) demonstrate the effectiveness of the proposed method.
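To make the control flow described above concrete, the following is a minimal sketch of an adapt-only-when-needed loop over data snapshots. It is an illustration under stated assumptions, not the AdaXpert implementation: the `Architecture` class, the mean-gap `data_difference` measure, the widening rule in `adjust`, and the fixed `threshold` are all hypothetical stand-ins for the learned adjuster, the data difference measure, and the adaptation condition.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Architecture:
    """Toy architecture description (hypothetical): a depth and a width."""
    depth: int
    width: int


def data_difference(prev: Sequence[float], curr: Sequence[float]) -> float:
    """Stand-in difference measure (assumption): gap between sample means."""
    prev_mean = sum(prev) / len(prev)
    curr_mean = sum(curr) / len(curr)
    return abs(curr_mean - prev_mean)


def adjust(arch: Architecture, diff: float) -> Architecture:
    """Hypothetical adjuster: widen the previous architecture in
    proportion to the data difference."""
    return Architecture(arch.depth, arch.width + int(round(8 * diff)))


def adapt(
    snapshots: List[Sequence[float]],
    initial: Architecture,
    threshold: float,
) -> List[Architecture]:
    """Return one architecture per snapshot, adjusting only when the
    difference to the previous snapshot exceeds the threshold."""
    archs = [initial]
    for prev, curr in zip(snapshots, snapshots[1:]):
        diff = data_difference(prev, curr)
        if diff > threshold:
            # Adaptation condition met: derive a new architecture
            # from the previous one and the measured difference.
            archs.append(adjust(archs[-1], diff))
        else:
            # Difference too small: reuse the previous architecture.
            archs.append(archs[-1])
    return archs


if __name__ == "__main__":
    snapshots = [[0.0, 1.0], [0.0, 1.1], [2.0, 3.0]]
    for arch in adapt(snapshots, Architecture(depth=4, width=16), threshold=0.5):
        print(arch)
```

In this toy run the second snapshot barely differs from the first, so the architecture is reused; only the third snapshot triggers an adjustment. The point of the design is that the (potentially expensive) adjuster is invoked only when the data have changed enough to justify it.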